BCN11 Wrap-Up

My Space Balloon presentation at BarCamp Nashville went well. I would normally 'wing' a BarCamp talk, showing only the pics and video or doing show-n-tell. But I realized earlier in the week that I had a lot to cover and only 35 minutes to do it, so I figured some organization of my material might be in order. Those of you who were there got to witness my first-ever keynote presentation. Woo hoo!

A number of people told me they missed it, either because roads were blocked off for a footrace that morning or because of the 9 AM timeslot. So, here is the presentation in PDF format, with movies removed: Space Balloon BCN11 Presentation. If you want to see the movies, they are linked on my blog here.

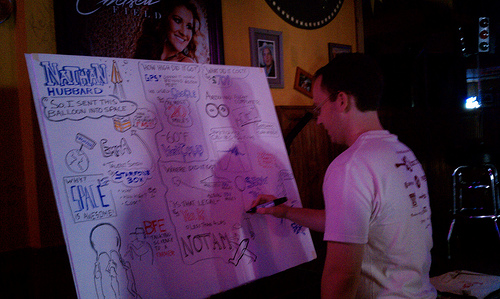

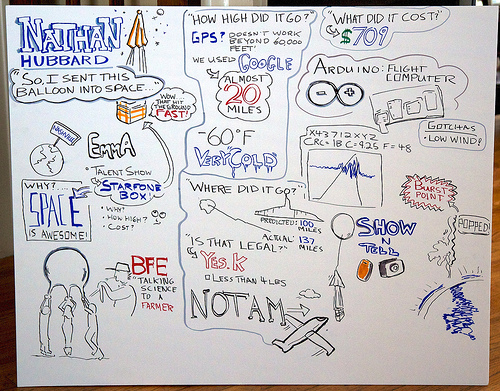

One other really cool thing that happened yesterday during the talk was Nick Navatta drawing a graphic of the presentation. Seriously cool. He even let me keep the end result, which I shot and put up on flickr.

Here is the full pic:

Nashville Amazon Web Services User Group

A few of us are putting together a local Amazon Web Services User Group here in Nashville. The initial idea is to follow a format similar to Portland's AWSUG. Invite local experts, give talks, get together and share stories, etc. Maybe host a yearly event in Nashville, not sure. These are all just ideas at the moment. AWS's most game-changing (and also complex) product is EC2 and I'm guessing that will garner a lot of interest. That's also my area of expertise and interest.

Scheduled for Wednesday, Sept 28th (2 weeks from yesterday), the first meetup would be an informal get-together to come up with a simple charter, brainstorm activities, list potential venues, and sketch out a general calendar for the next quarter. I figure this can be accomplished after work, across a table of drinks. Yeah?

If you'd like to join, we'll be at the Corsair Taproom from 6pm till about 8pm.

Nashville Amazon Web Services User Group (NAWSUG)

Initial Planning Meeting - Informal w/beer

Sept 28th 2011, 6pm-8pm at the Corsair Taproom

Corsair Taproom Location:

1200 Clinton Street #110

Nashville, TN 37203

615-321-9109

Map

Link

BarCamp 0b10

There's been a flurry of activity in Nashville about BarCamp over the last 48 hours...and 5 years. I won't summarize because it removes the passion. If you care, go read the posts (referenced at the bottom).

So what do we (the Nashville community) do about it? Well, we act. Earlier today Tim Moses posted this:

I think there is room for an alternate BarCamp that answers your concerns without conflicting with the current BarCamp. BCN may not be true BarCamp format, but it is successful. It may not be tech-heavy, but that seems to be improving in large part by the BCN organizers. Making dramatic changes to BCN would most likely hurt it more than help.

The problem isn't with the existence of BCN or how it operates, but the nonexistence of a tech-focused BarCamp. Many BCN supporters have repeatedly suggested an alternate, but no one has stepped up to do it.

On that note, Nathan Hubbard (ex-Telalinker with true BarCamp experience), and I are organizing BarCamp 0b10, a tech-focused, 2 day, traditional BarCamp to complement BCN. The current plan is to space it far enough from BCN (maybe Spring) to not force a choice between the two. If you like both, go to both, present at both. If not, pick your BarCamp of choice.

We're shooting for a date sometime this spring and looking to things like this and people like this for inspiration and guidance. Initial thoughts are: traditional BarCamp, 2 days (camping!), complementary to BCN, 'tech focused' but not tech only, simpler, less organized, etc. Tim and I will announce more via this blog and our twitter accounts (@timmoses, @n8foo) in the next few months. Keep your eyes peeled. Update: we have a new twitter account: @BarCamp0b10 and hashtag #0b10.

Right now, the Nashville community needs to focus on making BarCamp Nashville 2011 awesome. Go sign up. Go to make a difference. Go to see what it's like if you've never been. Don't wait on our event, go act.

References:

- Nashville Scene: BarCampNashville Descends Into Online BarFightNashville

- Chris Wage: The Not-So-Great Barcamp Schism

- Matt George: Clarification on BCN comments

- Twitter: BCN_Critic

- Countless posts on twitter by the Nashville BCN crew and other tech heavyweights.

- Bar Camp Nashville

- The Rules of BarCamp

- BarCampSD

Space Balloon Post Launch Wrapup

SPACE

The image above is a picture taken at 102,000 feet over Nashville back in April, from our Space Balloon.

Yep, the balloon launch back in April was a success. As was the presentation that Marc, Jared and I did at Emma Talent Night. It was actually far better than I could have imagined. We kept the whole project a secret right up until the night of the show. For weeks, people were watching us tossing parachutes out windows and messing with flashing lights and cameras. It really built up some great suspense. By the time the night finally arrived for the big reveal of our 'talent', the audience was actually chanting "SPACE! SPACE! SPACE! SPACE!". It was epic, really, for a bunch of nerds to get up and talk about sending a balloon to space in the middle of 2 dozen other awesome music, singing, magic and other acts. And get cheered at during the process. While drinking. Truly one of the most awesome things I've ever done.

Enough gushing, down to the data.

This data was recorded by our flight computer, an on board micro Arduino with 2 different sets of 1-Wire temp and barometric sensors and a 3-way gyro.

Avg ascent rate: 4.89 m/s (10.9 mph)

Avg ascent rate, first hour: 2.32 m/s (5.19 mph)

Min temperature: -59.116 F

Max altitude: 102,496 ft (19.4 mi)

Min air pressure: 930 Pa (0.93% of surface)

Max descent rate: 245 mph

-59 F! Wooo wee that's cold! The 245 mph speed was with the chute open! See, the air is so thin up there that even with the chute open it was screaming towards earth. At more reasonable altitudes, it slowed down to a more lazy pace of 15 mph. The height we achieved was 102.5K feet. That's almost 20 miles! One of our other calculations shows 106k feet so it was in that range somewhere.
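If you want to double-check the unit conversions in the numbers above, here is a minimal sketch in shell. The function names are mine, not part of the flight computer code, and it assumes the usual factors of 2.23694 mph per m/s and 5280 feet per mile.

```shell
#!/bin/sh
# Unit conversions for the flight numbers quoted above.
# Assumed factors: 1 m/s = 2.23694 mph, 1 mile = 5280 ft.

# Convert metres per second to miles per hour (two decimals).
ms_to_mph() {
    awk -v v="$1" 'BEGIN { printf "%.2f\n", v * 2.23694 }'
}

# Convert feet to miles (one decimal).
feet_to_miles() {
    awk -v f="$1" 'BEGIN { printf "%.1f\n", f / 5280 }'
}

ms_to_mph 4.89         # average ascent rate      -> 10.94
ms_to_mph 2.32         # first-hour ascent rate   -> 5.19
feet_to_miles 102496   # peak altitude            -> 19.4
```

The results line up with the mph and mile figures listed in the data.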

On to the videos and pictures:

This is what we showed at the talent show after we got up and gave our talk about the balloon, more of a quick documentary of the process. It always makes me laugh watching our first two parachute drop tests. Especially on the second one when Pamela laughs at us. Music courtesy of 84001 (my co-worker Jimmy's band).

This is the music video I put together for 84001, to be shown while they played live music on stage. It's various trippy time lapse and spacey stuff and about 10 minutes of balloon footage that starts around the 2:20 mark and the burst occurs at around 7:10...the music in this video is basically the same set they played live. The 3 cameras in the balloon filmed a total of 8GB of data across 3.5 hours, of which you are seeing less than 2% here:

More pictures on Flickr, with some video as well: http://www.flickr.com/photos/n8foo/sets/72157625894886863/

The actual slideshow, complete with graphs and stuff, from the presentation: http://www.youtube.com/watch?v=R_aiON0-2J8

Thanks to Marc and Jared for making this a super kick ass fun science project. I can't wait for the next one. :-)

tcpdump web traffic

Want to tcpdump your web traffic for debugging? Here's the formula I've been using lately:

tcpdump -s 1514 -Ai en0 'tcp port 80 and tcp[((tcp[12:1] & 0xf0) >> 2):4] = 0x47455420'
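A quick breakdown of that filter, as I understand it: tcp[12:1] is the byte holding the TCP data offset, its high nibble counts the header length in 32-bit words, and (byte & 0xf0) >> 2 turns that into a byte offset. The filter then compares the 4 bytes at that offset (the start of the payload) against 0x47455420, which is ASCII for "GET ". The sketch below just reproduces that arithmetic; the helper names are made up for illustration.

```shell
#!/bin/sh
# Hex-encode the first four bytes of a string, which is how a constant
# like 0x47455420 can be derived from "GET ".
first4_hex() {
    printf '%s\n' "$(printf '%s' "$1" | head -c 4 | od -An -tx1 | tr -d ' \n')"
}

# TCP header length in bytes, given byte 12 of the TCP header.
# The high nibble counts 32-bit words, so (byte & 0xf0) >> 2 = words * 4.
tcp_header_len() {
    echo $(( ($1 & 0xf0) >> 2 ))
}

first4_hex 'GET '     # 47455420
first4_hex 'POST'     # 504f5354
tcp_header_len 0x50   # 20 (header with no TCP options)
tcp_header_len 0x80   # 32 (header with 12 bytes of options)
```

Swapping 0x47455420 for 0x504f5354 in the filter should match POST requests instead, assuming the same interface and port.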

Reddit Traffic

This is what it looks like when someone posts a link to your blog on reddit (linux subreddit). I went from 48 visits the previous day to 581 visits the day of the reddit post. That's more than an order of magnitude more visitors. I don't give a s**t about visitor count, but as a graph and chart nerd, it's neat to see in Google Analytics.

Side note: great discussion on reddit. I will be making a followup article about pgp + vim, since that seems pretty slick. The jury is still out on which is a better solution for me.

encrypted password vault with Vim + openssl

In a post last year, DIY Encrypted Password Vault, I showed a simple way to use OpenSSL to create encrypted text files. Since I'd need to decrypt those files to edit them (usually with Vim), there would be an unencrypted temp file sitting around while I was editing. And using a filesystem with history meant those temp files were around for a long time. BAD. Surely there is a better way...

Can we encrypt directly with Vim? Actually, yes...Vim has encryption built in (via the -x flag)...it works and it's simple. Problem is that it uses 'crypt', which is not terribly hard to break. Also, it leaves a cleartext .tmp file around while you're editing it. Which means it's worthless to me for a password safe.

Enter the Vim openssl plugin. This plugin lets you write files with particular extensions corresponding to the type of encryption you desire (ex: .des3 .aes .bf .bfa .idea .cast .rc2 .rc4 .rc5), and it turns off the swap file and .viminfo log, leaving no tmp files around. Excellent! Here's typical usage:

Edit a new file with the .bfa extension:

$ vi test.bfa

Add your secrets and save it out. It will prompt you for a password (twice) to encrypt against.

blah blah blah : secrets of the world

~

~

~

~

:wq

enter bf-cbc encryption password:

Verifying - enter bf-cbc encryption password:

You can look at the data in the file to see the encrypted content:

$ cat test.bfa

U2FsdGVkX1+TPJBn3hsJ6nzsXzDvTXOxdDk1PkWkTDFG45HIvMnZbBNIrnJubPCY

EexmfIJpZqo=
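One way to convince yourself that this is ordinary OpenSSL output: base64-decode the start of the file and look at the first 8 bytes, which should be OpenSSL's "Salted__" marker. This is a small sketch with a made-up helper name, and it assumes a base64 command that accepts -d (GNU coreutils does; some older Macs want -D instead).

```shell
#!/bin/sh
# Decode a base64 string and print its first 8 bytes.
# For files written by "openssl enc" with a salt, this is "Salted__".
salt_header() {
    printf '%s' "$1" | base64 -d | head -c 8
    echo
}

# First 16 characters of the test.bfa contents shown above.
salt_header 'U2FsdGVkX1+TPJBn'   # Salted__
```

Given the bf-cbc prompt, something like `openssl enc -d -bf-cbc -a -in test.bfa` should decrypt the same file outside of Vim, assuming your OpenSSL build still ships Blowfish.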

To re-open a previously encrypted file, just open it with vi. The plugin automatically recognizes the extension and prompts for your password:

"test.bfa" 2L, 78C

enter bf-cbc decryption password:

Pretty slick! You'll need the openssl binary in your path for this to work, which is pretty standard these days. Here is a little script that I run to set this up on my various home directories:

#! /bin/sh

test -d ~/.vim || mkdir ~/.vim/

test -d ~/.vim/plugin || mkdir ~/.vim/plugin

curl "http://www.vim.org/scripts/download_script.php?src_id=8564" > ~/.vim/plugin/openssl.vim

Edit: 2010+ versions of Vim have blowfish support. Excellent, forward progress! I'm probably not going to upgrade Vim on my Mac and all my servers just for this when a plugin can work. Good to see progress but for now, this makes the most sense for me.

Space Balloon One

My friend Marc and I started doing research on what it would take to send a balloon to 'near space'. We've been inspired by a few others, most recently the father-son team from the UK that sent an iPhone up to 100,000 feet. We think we can build this for under $200, probably less.

Things we know:

- 4 lb or larger payload requires FAA approval

- GPS ceiling limit is 11 miles

- Temps are -70F

- Winds are 150MPH

- good chance of a water/wet landing

- single balloon

The first launch will be to test the concepts and recovery mechanism. We plan to use the InstaMapper service in combination with a T-Mobile phone for ground tracking. We have a camera that would do the trick for the image capturing, using CHDK. Our friend has donated a cryogenic styrofoam box that should help with insulation, and we can use hot-packs to keep it warm in there. We also need some sort of LED light to help us with recovery after dusk.

We're also considering building a small data tracking device for recording temperature, light and pressure. Maybe some other environmentals, not sure. Probably Arduino powered, since that seems pretty easy and cheap.

Questions we have right now:

- how do we track it over 11 miles?

- what kind of balloon do I use?

- how do we deploy the parachute?

- what are we missing?

Would love to see some comments by fellow space nerds.

2010 Camera Advice

This comes from some advice I gave a friend who is looking to travel around the world and asked about cameras for the trip.

I always say 'you should buy a DSLR', as it's a quantum leap in abilities. The huge resulting change in your photos is worth it. Just know that its bulk may mean you don't have it out all the time, even unconsciously. Most likely, if you get one now, you'll carry it everywhere for at least some time, and the pictures you'll have for the rest of your life will be worth it.

From my own travel experiences: When you're walking around with a big camera and glass around your neck, the weight and size get to be a burden. So does the "I have an expensive camera" factor. I took my DSLR and a powershot with me on my moto trip...ended up using the powershot for 95% of the shots for those reasons. It was a cheaper cam too, so I would take equipment-risky shots...I dropped it a handful of times where my DSLR would have shattered and would hand it to anyone willing to take a shot. (http://www.flickr.com/photos/n8foo/3597617054/) There is also something to be said for having a camera that's easy to 'wear' all day and have ready to fire. With my DSLR setup, I find myself asking 'is this shot worth getting this thing back out?' all the time...and I miss opportunities due to it. Which means, instead of in my sling bag, I carry it around my neck/in my hands constantly, and we're back to the top of this paragraph. I have long considered picking up a G11 (G12 out now w/better low light) as my travel camera for these reasons.

Back to the DSLR: assuming a Canon 550D, I'd look at 3 lenses. The EF-S 18-200 IS is a kick ass walkabout lens; I use it on my 7D when I don't know what kind of photos to expect (travel). It has 11x equivalent zoom, so you can get wide shots and then zoom in on that wildlife off in the distance. The EF-S 17-55 2.8 IS is amazing. Nearly all my best shots have been taken with it. It's got L glass but isn't designated L due to the EF-S lineup. Downside is that it's kinda big. If you want that awesome DOF, pick up a Canon 50mm f/1.8 for $99...it's cheap plastic but will take good low light portraits. I personally opted for the Sigma 30mm 1.4 for my fast prime lens, but it was 4x the price.

Micro 4/3 has no viewfinder, which sucks. One is available as a separate accessory on most models, but then you're about as bulky as a DSLR. The E-P2 is the best of the 4/3 breed, so you can't really go wrong if you do go down that road. I'd be jealous of it. :-) I played with a Sony NEX-5 tonight at Target and I hated its ergos and electronic focus ring. Shots looked pretty, though. If you're looking seriously at the 4/3 stuff, check the Canon G11/G12; those are amazing cams and have good ergos. The f/2.8 is plenty for low light, coupled with the IS and kick butt sensor (12800 ISO!).

Re: DOF - if you want more DOF, get further away from your subject and use the zoom. It tends to flatten the image but the DOF will be more dramatic than at closer ranges. You probably already know this.

One last comment: one of the best things you can get to make your photography better is a tripod or even a monopod. It'll make you compose your shots, make them sharper and let you leave the shutter open longer than 1/30th.

No matter what gear you buy, 'getting better at photography' is the right path.

network gear config management with tftpd and subversion

Keeping revisions and history on device configs is an essential part of a good change control process. I've found this to be an extremely useful and ass-saving part of system/network administration.

WARNING: Consider the security ramifications before you start a project like this. Access to network configs saved on a filesystem or code repository can reveal network topology and login information (some network gear passwords are easily decrypted). Be careful how and where you store this data. For my environment, the tftp server and SVN repository have restricted access to only the systems team.

Here's how I do it:

- tftpd server reachable from switch management network

- subversion repository for switch configs

- manually saving switch configs to the tftpd server

- cron'd script to automatically check in switch configs (if I don't do it myself)

Setting up TFTPd is pretty easy. On Ubuntu/Debian, it's simple:

apt-get install xinetd tftpd tftp

Set up something like this in /etc/xinetd.d:

    service tftp
    {
        protocol    = udp
        port        = 69
        socket_type = dgram
        wait        = yes
        user        = nobody
        server      = /usr/sbin/in.tftpd
        server_args = /tftpboot
        disable     = no
    }

Set up the various directories and start the daemon. Be sure of your permissions, as your switch configs will be written to these directories.

    mkdir -p /tftpboot/netconfigs/
    chmod -R 700 /tftpboot
    chown -R nobody /tftpboot
    /etc/init.d/xinetd start

Double check that you can write switch configs out using your network gear. This is what it looks like on a Cisco 3560:

copy system:/running-config tftp://TFTPHOST:/netconfigs/SWITCH.config

And on a Cisco PIX firewall:

wr net TFTPHOST:netconfigs/SWITCH.config

Now you need to automate this. I created a utility user on each switch and a utility user in my subversion repository. This is what my tftp_switch.sh script looks like for my Cisco 3560s:

    #!/bin/sh
    DATE=$(date +%F)
    SWITCHES='sw-1 sw-2 sw-3 sw-4 sw-5'
    USER=username
    PASS=password
    TFTPHOST="TFTPHOST"

    for SWITCH in $SWITCHES
    do
        # feed the login and copy commands to telnet, pausing between steps
        (echo "${USER}"
        sleep 1
        echo "${PASS}"
        sleep 1
        echo "copy system:/running-config tftp://${TFTPHOST}://netconfigs/${SWITCH}.config"
        sleep 15
        echo "exit"
        sleep 2
        echo exit
        while read cmd
        do
            echo $cmd
        done) | telnet $SWITCH >> ~/cronlogs/${SWITCH}.${DATE}.log
    done

The 'sleep 15' is there in case it takes a moment to write to the tftp server.

I set up another script that runs the actions above, moves the files into the correct subversion tree, scrubs the files for strings that change too much (like timestamps or what-not) and then checks them into SVN. Here's my example:

    #! /bin/sh
    # write out network configs to TFTP server
    /root/bin/tftp_switch.sh >/dev/null 2>&1
    # copy them into the SVN tree
    cp -fv /tftpboot/netconfigs/*.config /root/svn/network/
    # remove things that change all the time
    sed -i "s/ntp clock-period.*/ntp clock-period/g" /root/svn/network/sw-*.config
    sed -i "s/Written by.*/Written by/g" /root/svn/network/sw-*.config
    # check them in with subversion
    cd /root/svn/network ; \
    svn add -q *.config ; \
    svn commit -q -m 'automatic checkin'
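To see what the scrubbing step does before pointing it at real files, here is a small sketch that runs the same two substitutions over stdin instead of editing in place. The function name and the sample config lines are made up for illustration; your gear's actual output may look different.

```shell
#!/bin/sh
# Apply the same two substitutions to stdin rather than editing files in place.
scrub_config() {
    sed -e 's/ntp clock-period.*/ntp clock-period/' \
        -e 's/Written by.*/Written by/'
}

# Hypothetical sample lines standing in for a saved switch config.
printf '%s\n' \
    '! Written by admin at 02:14:07 UTC' \
    'hostname sw-1' \
    'ntp clock-period 36029056' | scrub_config
```

The idea is that the volatile tail of each matching line is dropped, so a nightly check-in only produces a new revision when something meaningful has changed.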

I made both scripts owned by root with mode 500 and scheduled the check-in script to run nightly from cron.

Since everything is now stored within SVN, I can check configs out and in, see who saved them and when (depending on whether your gear writes that in the output) and compare them to previous versions. I run WebSVN on my repo so it's very easy to see what has changed. Super useful.

If anyone implements this and has suggestions for change, please let me know!